MapReduce Basics in Practice on the educoder Platform


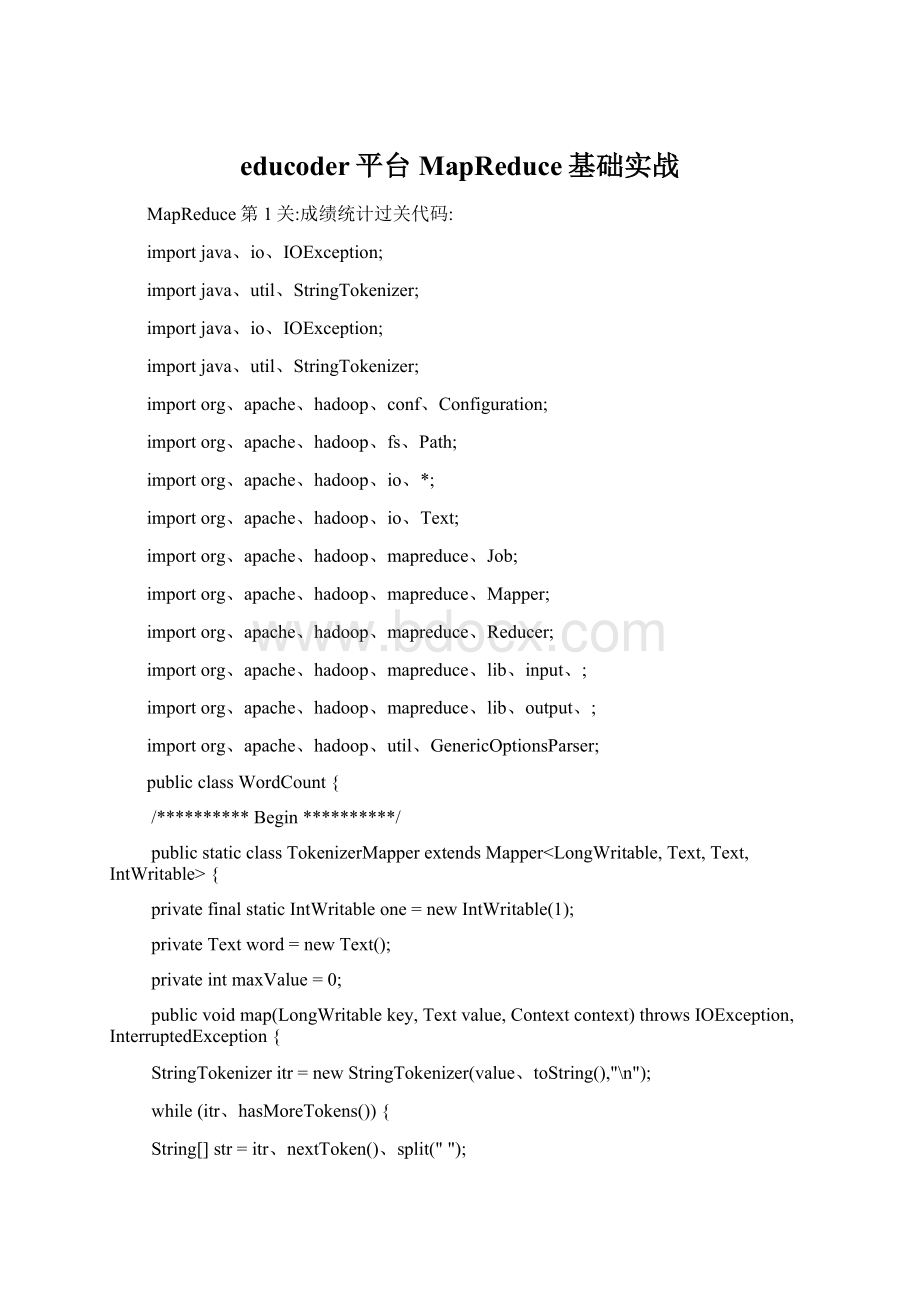


MapReduce Level 1:

Score statistics, passing code:

import java.io.IOException;
import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.*;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

public class WordCount {

    /**********Begin**********/

    // Mapper: parses each "name score" line and emits (name, score).
    public static class TokenizerMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

        // Reused output value; it holds the score parsed from the current line.
        private final static IntWritable one = new IntWritable(1);
        private Text word = new Text();

        public void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            StringTokenizer itr = new StringTokenizer(value.toString(), "\n");
            while (itr.hasMoreTokens()) {
                // Fields are separated by a single space: name first, then score.
                String[] str = itr.nextToken().split(" ");
                String name = str[0];
                one.set(Integer.parseInt(str[1]));
                word.set(name);
                context.write(word, one);
            }
            //context.write(word, one);
        }
    }

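    // Reducer: keeps the highest score seen for each name.
    public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

        private IntWritable result = new IntWritable();

        public void reduce(Text key, Iterable<IntWritable> values, Context context)
                throws IOException, InterruptedException {
            int maxAge = 0; // running maximum of the values for this key
            for (IntWritable intWritable : values) {
                maxAge = Math.max(maxAge, intWritable.get());
            }
            result.set(maxAge);
            context.write(key, result);
        }
    }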

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf, "wordcount");
        job.setJarByClass(WordCount.class);
        job.setMapperClass(TokenizerMapper.class);
        // Taking a maximum is associative, so the reducer can also serve as the combiner.
        job.setCombinerClass(IntSumReducer.class);
        job.setReducerClass(IntSumReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        String inputfile = "/user/test/input";
        String outputFile = "/user/test/output/";
        FileInputFormat.addInputPath(job, new Path(inputfile));
        FileOutputFormat.setOutputPath(job, new Path(outputFile));
        job.waitForCompletion(true);
        /**********End**********/
    }
}
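To check the per-name maximum logic without a Hadoop cluster, the same computation can be sketched in plain Java. This is only an illustration: the class name `MaxScoreSketch` and the method `maxScores` are hypothetical, and the input is assumed to be one `name score` pair per line separated by a single space, as the mapper above expects.

```java
import java.util.Map;
import java.util.TreeMap;

// Hypothetical stand-in for the Level 1 job; it does not use Hadoop.
class MaxScoreSketch {

    // Groups scores by name and keeps the maximum, like the map and reduce steps combined.
    static Map<String, Integer> maxScores(String input) {
        // TreeMap keeps names sorted, loosely mirroring sorted reducer keys.
        Map<String, Integer> best = new TreeMap<>();
        for (String line : input.split("\n")) {
            if (line.trim().isEmpty()) {
                continue; // ignore blank lines
            }
            String[] parts = line.trim().split(" ");
            // merge() stores the score, or the larger of the old and new score.
            best.merge(parts[0], Integer.parseInt(parts[1]), Integer::max);
        }
        return best;
    }

    public static void main(String[] args) {
        String input = "alice 80\nbob 95\nalice 92\nbob 70";
        for (Map.Entry<String, Integer> e : maxScores(input).entrySet()) {
            System.out.println(e.getKey() + "\t" + e.getValue());
        }
    }
}
```

The sample names and scores are made up for the demonstration; on the platform the real input comes from the HDFS input directory.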

Command line

touch file01
echo Hello World Bye World
cat file01
echo Hello World Bye World > file01
cat file01
touch file02
echo Hello Hadoop Goodbye Hadoop > file02
cat file02
start-dfs.sh
hadoop fs -mkdir /usr
hadoop fs -mkdir /usr/input
hadoop fs -ls /usr/output
hadoop fs -ls /
hadoop fs -ls /usr
hadoop fs -put file01 /usr/input
hadoop fs -put file02 /usr/input
hadoop fs -ls /usr/input

Evaluate

——————————————————————————————————

MapReduce Level 2:

File content merge and deduplication code

import java.io.IOException;
import java.util.*;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.*;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

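public class Merge {

    /**
     * @param args
     * Merges files A and B and removes duplicate content, producing a new output file C.
     */

    // Override the map function here, copying the input value directly to the output key.
    // Note that the map method must declare: throws IOException, InterruptedException
    /**********Begin**********/
    public static class Map extends Mapper<LongWritable, Text, Text, Text> {

        @Override
        protected void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            // Each line holds two fields separated by a single space.
            String str = value.toString();
            String[] data = str.split(" ");
            Text t1 = new Text(data[0]);
            Text t2 = new Text(data[1]);
            context.write(t1, t2);
        }
    }
    /**********End**********/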

    // Override the reduce function here, copying the input key directly to the output key.
    // Note that the reduce method must declare: throws IOException, InterruptedException
    /**********Begin**********/
    public static class Reduce extends Reducer<Text, Text, Text, Text> {

        @Override
        protected void reduce(Text key, Iterable<Text> values, Context context)
                throws IOException, InterruptedException {
            // Collect each distinct value for this key once.
            List<String> list = new ArrayList<>();
            for (Text text : values) {
                String str = text.toString();
                if (!list.contains(str)) {
                    list.add(str);
                }
            }
            // Emit the distinct values in sorted order.
            Collections.sort(list);
            for (String text : list) {
                context.write(key, new Text(text));
            }
        }
        /**********End**********/
    }

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf, "wordcount");
        job.setJarByClass(Merge.class);
        job.setMapperClass(Map.class);
        job.setCombinerClass(Reduce.class);
        job.setReducerClass(Reduce.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);
        String inputPath = "/user/tmp/input/";   // set the input path here
        String outputPath = "/user/tmp/output/"; // set the output path here
        FileInputFormat.addInputPath(job, new Path(inputPath));
        FileOutputFormat.setOutputPath(job, new Path(outputPath));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
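The merge-and-deduplicate step can be tried locally with a small plain-Java sketch. The names `MergeDedupSketch` and `merge` are hypothetical and no Hadoop classes are used. It assumes, as the job above does, that every line has two fields separated by one space, and it joins key and value with a tab, which is the default separator of Hadoop's text output.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;
import java.util.TreeSet;

// Hypothetical stand-in for the Level 2 job; it does not use Hadoop.
class MergeDedupSketch {

    // Merges the lines of two files, dropping duplicate (key, value) pairs.
    static List<String> merge(List<String> fileA, List<String> fileB) {
        List<String> all = new ArrayList<>(fileA);
        all.addAll(fileB);

        // "Map" and "shuffle": group the second field under the first field.
        // TreeSet removes duplicates and keeps the values sorted.
        Map<String, Set<String>> grouped = new TreeMap<>();
        for (String line : all) {
            String[] data = line.split(" ");
            grouped.computeIfAbsent(data[0], k -> new TreeSet<>()).add(data[1]);
        }

        // "Reduce": emit one output line per distinct pair.
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, Set<String>> e : grouped.entrySet()) {
            for (String v : e.getValue()) {
                out.add(e.getKey() + "\t" + v);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> a = Arrays.asList("20150101 x", "20150102 y", "20150103 x");
        List<String> b = Arrays.asList("20150101 y", "20150102 y", "20150101 x");
        merge(a, b).forEach(System.out::println);
    }
}
```

The sample lines are invented for illustration. A `TreeSet` replaces the `contains` check plus `Collections.sort` of the reducer; both yield distinct values in natural string order.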

Evaluate

———————————————————————————————————————

MapReduce Level 3:

Information mining: code for mining parent-child relationships

import java.io.IOException;
import java.util.*;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.GenericOptionsParser;

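public class simple_data_mining {

    public static int time = 0;

    /**
     * @param args
     * Input: a child-parent table.
     * Output: a table of grandchild-grandparent relationships.
     */

    // Map splits each input line on the space into child and parent, then emits it once in
    // forward order as the right table and once in reverse order as the left table. The
    // output value must carry a flag that tells the left and right tables apart.
    public static class Map extends Mapper<Object, Text, Text, Text> {

        public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
            /**********Begin**********/
            String child_name = new String();
            String parent_name = new String();
            String relation_type = new String();
            String line = value.toString();
            // Find the first space, which separates child from parent.
            int i = 0;
            while (line.charAt(i) != ' ') {
                i++;
            }
            String[] values = {line.substring(0, i), line.substring(i + 1)};
            if (values[0].compareTo("child") != 0) { // skip the header row
                child_name = values[0];
                parent_name = values[1];
                relation_type = "1"; // flag that distinguishes the left and right tables
                context.write(new Text(values[1]), new Text(relation_type + "+" + child_name + "+" + parent_name));
                // left table
                relation_type = "2";
                context.write(new Text(values[0]), new Text(relation_type + "+" + child_name + "+" + parent_name));
                // right table
            }
            /**********End**********/
        }
    }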

    public static class Reduce extends Reducer<Text, Text, Text, Text> {

        public void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {
            /**********Begin**********/
            if (time == 0) { // write the header row once
                context.write(new Text("grand_child"), new Text("grand_parent"));
                time++;
            }
            // The fixed size assumes at most 10 entries per key on each side.
            int grand_child_num = 0;
            String grand_child[] = new String[10];
            int grand_parent_num = 0;
            String grand_parent[] = new String[10];
            Iterator<Text> ite = values.iterator();
            while (ite.hasNext()) {
                String record = ite.next().toString();
                int len = record.length();
                int i = 2;
                if (len == 0) continue;
                char relation_type = record.charAt(0);
                String child_name = new String();
                String parent_name = new String();
                // extract the child from this value in the value list
                while (record.charAt(i) != '+') {
                    child_name = child_name + record.charAt(i);
                    i++;
                }
                i = i + 1;
                // extract the parent from this value in the value list
                while (i < len) {
                    parent_name = parent_name + record.charAt(i);
                    i++;
                }
                // left table: store the child in grand_child
                if (relation_type == '1') {
                    grand_child[grand_child_num] = child_name;
                    grand_child_num++;
                }
                else { // right table: store the parent in grand_parent
                    grand_parent[grand_parent_num] = parent_name;
                    grand_parent_num++;
                }
            }
            if (grand_parent_num != 0 && grand_child_num != 0) {
                for (int m = 0; m < grand_child_num; m++) {
                    for (int n = 0; n < grand_parent_num; n++) {
                        context.write(new Text(grand_child[m]), new Text(grand_parent[n]));
                        // emit the result
                    }
                }
            }
            /**********End**********/
        }
    }

    public static void main(String[] args) throws Exception {
        // TODO Auto-generated method stub
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf, "Single table join");
        job.setJarByClass(simple_data_mining.class);
        job.setMapperClass(Map.class);
        job.setReducerClass(Reduce.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);
        String inputPath = "/user/reduce/input";   // set the input path
        String outputPath = "/user/reduce/output"; // set the output path
        FileInputFormat.addInputPath(job, new Path(inputPath));
        FileOutputFormat.setOutputPath(job, new Path(outputPath));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
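The single-table self-join behind this job may be easier to follow in plain Java. In the sketch below, which uses no Hadoop classes, each person acts as the join key: the children recorded under that key come from the left table and the parents from the right table, and every pairing of the two is a grandchild-grandparent row. The names `GrandparentJoinSketch` and `join` and the sample data are hypothetical, and the order in which a real reducer receives values is not guaranteed to match this sketch.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical stand-in for the Level 3 job; it does not use Hadoop.
class GrandparentJoinSketch {

    // Takes "child parent" lines and returns "grandchild<TAB>grandparent" lines.
    static List<String> join(List<String> lines) {
        Map<String, List<String>> childrenOf = new TreeMap<>(); // left table, keyed by parent
        Map<String, List<String>> parentsOf = new TreeMap<>();  // right table, keyed by child
        for (String line : lines) {
            int i = line.indexOf(' ');
            if (i < 0) {
                continue; // not a two-field line
            }
            String child = line.substring(0, i);
            String parent = line.substring(i + 1);
            if (child.equals("child")) {
                continue; // skip the header row
            }
            childrenOf.computeIfAbsent(parent, k -> new ArrayList<>()).add(child);
            parentsOf.computeIfAbsent(child, k -> new ArrayList<>()).add(parent);
        }

        List<String> out = new ArrayList<>();
        for (String person : childrenOf.keySet()) {
            List<String> grandparents = parentsOf.get(person);
            if (grandparents == null) {
                continue; // this person has no recorded parents
            }
            // Cross product: each child of person with each parent of person.
            for (String grandchild : childrenOf.get(person)) {
                for (String grandparent : grandparents) {
                    out.add(grandchild + "\t" + grandparent);
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList(
                "child parent", "Steven Lucy", "Steven Jack", "Lucy Mary", "Lucy Frank");
        join(lines).forEach(System.out::println);
    }
}
```

Using lists instead of the fixed-size arrays of the reducer avoids any limit on how many children or parents a key may have.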

Evaluate