C Implementation of the Neural Network BP Algorithm (2)

《神经网络BP算法的C语言实xian 2Word文件下载.docx》由会员分享,可在线阅读,更多相关《神经网络BP算法的C语言实xian 2Word文件下载.docx(12页珍藏版)》请在冰豆网上搜索。

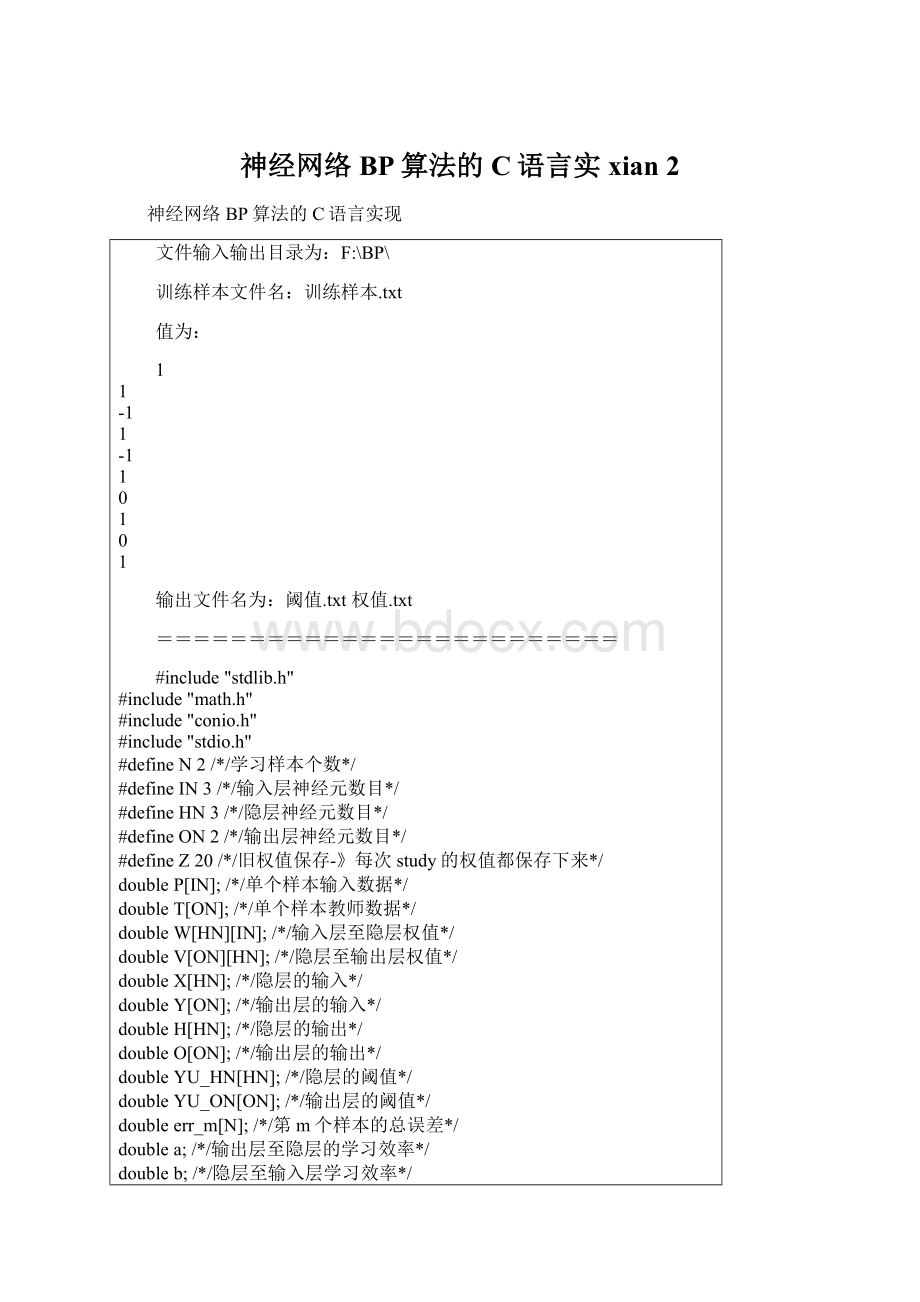

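```c
double P[IN];         /* input data of a single sample */
double T[ON];         /* teacher (target) data of a single sample */
double W[HN][IN];     /* weights from the input layer to the hidden layer */
double V[ON][HN];     /* weights from the hidden layer to the output layer */
double X[HN];         /* input of the hidden layer */
double Y[ON];         /* input of the output layer */
double H[HN];         /* output of the hidden layer */
double O[ON];         /* output of the output layer */
double YU_HN[HN];     /* thresholds of the hidden layer */
double YU_ON[ON];     /* thresholds of the output layer */
double err_m[N];      /* total error of the m-th sample */
double a;             /* learning rate, output layer to hidden layer */
double b;             /* learning rate, hidden layer to input layer */
double alpha;         /* momentum factor, used by the improved BP algorithm */
double d_err[ON];
FILE *fp;
```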
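The globals `err_m` and `d_err` hold per-sample error quantities whose formulas appear in training code outside this excerpt. As a minimal sketch, assuming the usual squared-error loss and sigmoid output units, the error of one sample and the output-layer error signal (what `d_err` presumably stores) could be computed as follows. The names `sample_error` and `output_delta` are hypothetical, not taken from the program.

```c
#include <assert.h>

/* Hypothetical helper: squared-error loss for one sample,
 * E_m = 0.5 * sum_k (T_k - O_k)^2 (one common definition of the
 * "total error of the m-th sample"; the 0.5 factor is an assumption). */
double sample_error(const double *t, const double *o, int n_out)
{
    double e = 0.0;
    for (int k = 0; k < n_out; k++) {
        double diff = t[k] - o[k];
        e += diff * diff;
    }
    return 0.5 * e;
}

/* Hypothetical helper: error signal of sigmoid output units,
 * d_k = (T_k - O_k) * O_k * (1 - O_k), where O_k * (1 - O_k) is the
 * derivative of the logistic sigmoid expressed through its output. */
void output_delta(const double *t, const double *o, double *d, int n_out)
{
    assert(n_out > 0);
    for (int k = 0; k < n_out; k++)
        d[k] = (t[k] - o[k]) * o[k] * (1.0 - o[k]);
}
```

For targets {1, 0} and outputs {0.5, 0.5}, the loss is 0.25 and the error signals are 0.125 and -0.125.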

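```c
/* structure holding the training samples */
struct {
    double input[IN];
    double teach[ON];
} Study_Data[N];

/* used by the improved BP algorithm to save the weights computed in each iteration */
struct {
    double old_W[HN][IN];
    double old_V[ON][HN];
} Old_WV[Z];
```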
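The momentum factor `alpha` and the weight history in `Old_WV` support the improved BP algorithm. The update rule itself is not part of this excerpt; a common form adds a fraction `alpha` of the previous weight change to the plain BP step: w(t+1) = w(t) + eta * delta * x + alpha * (w(t) - w(t-1)). The sketch below assumes that form, treats `delta * x` as already carrying the sign of (T - O), and only keeps the single previous snapshot, whereas `Old_WV[Z]` can hold several. Both function names are hypothetical.

```c
#include <assert.h>

/* Hypothetical helper: one momentum step for a single weight.
 *   w      : current weight w(t)
 *   w_prev : previous weight w(t-1), e.g. a value saved in Old_WV
 *   grad   : the plain BP term delta * input for this weight
 *   eta    : learning rate (the role played by a or b in the program)
 *   alpha  : momentum factor, assumed to satisfy 0 <= alpha < 1 */
double momentum_update(double w, double w_prev, double grad,
                       double eta, double alpha)
{
    assert(alpha >= 0.0 && alpha < 1.0);
    return w + eta * grad + alpha * (w - w_prev);
}

/* Apply the update to a rows x cols weight matrix stored row-major,
 * then shift the current weights into old[] for the next step. */
void momentum_update_matrix(double *w, double *old, const double *grad,
                            int rows, int cols, double eta, double alpha)
{
    for (int r = 0; r < rows; r++) {
        for (int c = 0; c < cols; c++) {
            int idx = r * cols + c;
            double next = momentum_update(w[idx], old[idx], grad[idx],
                                          eta, alpha);
            old[idx] = w[idx];   /* w(t) becomes w(t-1) */
            w[idx] = next;
        }
    }
}
```

With a zero gradient the weights keep drifting in the direction of their last change, which is what lets momentum carry the search across flat regions of the error surface.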

```c
int Start_Show()
{
    clrscr();
    printf("\n\n                    ***********************\n");
    printf("                    *    Welcome to use   *\n");
    printf("                    *   this program of   *\n");
    printf("                    *  calculating the BP *\n");
    printf("                    *        model!       *\n");
    printf("                    *   Happy every day!  *\n");
    printf("                    ***********************\n");
    printf("\n\nBefore starting, please read the following carefully:\n\n");
    printf("    1. Please ensure the path of '训练样本.txt' (xunlianyangben.txt) is\n"
           "       correct, like '\\BP\\训练样本.txt'!\n");
    printf("    2. The calculation results will be saved in the path '\\BP\\'!\n");
    printf("    3. The program will load 10 data samples from '\\BP\\训练样本.txt' when running!\n");
    printf("    4. The BP program can train itself for no more than 30000 times.\n"
           "       If that number is exceeded, the program ends by itself\n"
           "       to prevent running infinitely because of an error!\n");
    printf("\n\n\n");
    printf("Now press any key to start...\n");
    getch();
    return 0;
}
```

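```c
int End_Show()
{
    printf("\n\n---------------------------------------------------\n");
    printf("The program has reached the end successfully!\n\nPress any key to exit!\n\n");
    printf("\n                    ***********************\n");
    printf("                    *    This is the end  *\n");
    printf("                    * of the program which*\n");
    printf("                    * can calculate the BP*\n");
    printf("                    *        model!       *\n");
    printf("                    *  Thanks for using!  *\n");
    printf("                    ***********************\n");
    getch();
    exit(0);
}
```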

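```c
int GetTrainingData() /*OK*/
{
    int m, i, j;
    int datr;
    if ((fp = fopen("f:\\bp\\训练样本.txt", "r")) == NULL) /* read the training samples */
    {
        printf("Cannot open file, strike any key to exit!");
        getch();
        exit(1);
    }
    m = 0;
    i = 0;
    j = 0;
    /* The file holds N*IN input values (sample by sample) followed by
       N*ON teacher values: i is the position inside the current sample,
       m the sample index, j the number of values read so far. */
    while (fscanf(fp, "%d", &datr) == 1) /* == 1 also stops on a malformed token */
    {
        j++;
        if (j <= (N * IN))
        {
            if (i < IN)
            {
                Study_Data[m].input[i] = datr;
                /*printf("\nthe Study_Data[%d].input[%d]=%f\n",m,i,Study_Data[m].input[i]);*/
                /*use to check the loaded training data*/
            }
            if (m == (N - 1) && i == (IN - 1))
            {
                m = 0;   /* all inputs read: restart at sample 0 for the teacher data */
                i = -1;
            }
            if (i == (IN - 1))
            {
                m++;
                i = -1;
            }
        }
        else if ((N * IN) < j && j <= (N * IN + N * ON))
        {
            if (i < ON)
            {
                Study_Data[m].teach[i] = datr;
                /*printf("\nThe Study_Data[%d].teach[%d]=%f",m,i,Study_Data[m].teach[i]);*/
            }
            if (m == (N - 1) && i == (ON - 1))
                printf("\n");
            if (i == (ON - 1))
            {
                m++;
                i = -1;
            }
        }
        i++;
    }
    fclose(fp);
    printf("\nThere are [%d] data values that have been loaded successfully!\n", j);

    /* show the data which has been loaded */
    printf("\nShow the data which has been loaded as follows:\n");
    for (m = 0; m < N; m++)
    {
        for (i = 0; i < IN; i++)
        {
            printf("\nStudy_Data[%d].input[%d]=%f", m, i, Study_Data[m].input[i]);
        }
        for (j = 0; j < ON; j++)
        {
            printf("\nStudy_Data[%d].teach[%d]=%f", m, j, Study_Data[m].teach[j]);
        }
    }
    printf("\n\nPress any key to start calculating...");
    getch();
    return 1;
}
```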
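GetTrainingData() walks a flat stream of integers with the counters `i`, `m` and `j`. The same layout (all N×IN inputs sample by sample, then all N×ON teacher values) can also be expressed with plain index arithmetic. The sketch below is not the program's own loader: the sizes `DEMO_N`, `DEMO_IN`, `DEMO_ON` and the names `demo_sample`, `place_value` and `load_samples` are hypothetical.

```c
#include <assert.h>
#include <stdio.h>

/* Illustrative sizes only; the real program uses its own N, IN and ON. */
#define DEMO_N  2   /* number of samples          */
#define DEMO_IN 3   /* inputs per sample          */
#define DEMO_ON 1   /* teacher values per sample  */

struct demo_sample {
    double input[DEMO_IN];
    double teach[DEMO_ON];
};

/* Store the k-th value (0-based) read from the file: the first N*IN
 * values are inputs, the next N*ON are teacher values.
 * Returns 1 if the value was stored, 0 if k is past the expected data. */
int place_value(struct demo_sample *s, int k, double value)
{
    assert(k >= 0);
    if (k < DEMO_N * DEMO_IN) {
        s[k / DEMO_IN].input[k % DEMO_IN] = value;
        return 1;
    }
    k -= DEMO_N * DEMO_IN;
    if (k < DEMO_N * DEMO_ON) {
        s[k / DEMO_ON].teach[k % DEMO_ON] = value;
        return 1;
    }
    return 0;
}

/* Read whitespace-separated integers from fp into s and return how many
 * were stored; stops at EOF or at the first non-numeric token. */
int load_samples(FILE *fp, struct demo_sample *s)
{
    int value, k = 0, stored = 0;
    while (fscanf(fp, "%d", &value) == 1)
        stored += place_value(s, k++, value);
    return stored;
}
```

With these demo sizes, the stream `1 2 3 4 5 6 7 8` yields inputs {1, 2, 3} and {4, 5, 6} and teacher values 7 and 8.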

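```c
/*///////////////////////////////////*/
/* subroutine: initialize weights and thresholds */
int initial()
{
    int i, ii, j, jj, k, kk;
    /* initialize hidden-layer weights */
    for (i = 0; i < HN; i++)
    {
        for (j = 0; j < IN; j++) /* start at 0 so that W[i][0] is initialized too */
        {
            /* input->hidden weights: random values in [-1, 1] */
            W[i][j] = (rand() / (double)RAND_MAX) * 2 - 1;
            printf("w[%d][%d]=%f\n", i, j, W[i][j]);
        }
    }
    for (ii = 0; ii < ON; ii++)
    {
        for (jj = 0; jj < HN; jj++)
        {
            /* hidden->output weights: random values in [-1, 1] */
            V[ii][jj] = (rand() / (double)RAND_MAX) * 2 - 1;
            printf("V[%d][%d]=%f\n", ii, jj, V[ii][jj]);
        }
    }
    for (k = 0; k < HN; k++)
    {
        /* hidden-layer thresholds: random values in [-1, 1] */
        YU_HN[k] = (rand() / (double)RAND_MAX) * 2 - 1;
        printf("YU_HN[%d]=%f\n", k, YU_HN[k]);
    }
    for (kk = 0; kk < ON; kk++)
    {
        /* output-layer thresholds: random values in [-1, 1] */
        YU_ON[kk] = (rand() / (double)RAND_MAX) * 2 - 1;
    }
    return 1;
} /* end of subroutine initial() */
```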
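Scaling `rand()` into [-1, 1] depends on `RAND_MAX`: dividing by the literal 32767 is only correct where `RAND_MAX` is 32767 (as in Turbo C and MSVC), while glibc uses 2147483647, which would squeeze every value toward the high end of the range. A small portable helper, sketched here with the hypothetical names `rand_uniform` and `init_weights`, makes the intended range explicit. Seeding with `srand()` is left to the caller.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical helper: uniform pseudo-random value in [lo, hi].
 * Dividing by RAND_MAX keeps the range correct on any platform. */
double rand_uniform(double lo, double hi)
{
    assert(lo <= hi);
    return lo + (hi - lo) * ((double)rand() / RAND_MAX);
}

/* Fill a rows x cols weight matrix (row-major) with values in [-1, 1],
 * the range that initial() uses for weights and thresholds. */
void init_weights(double *w, int rows, int cols)
{
    for (int i = 0; i < rows * cols; i++)
        w[i] = rand_uniform(-1.0, 1.0);
}
```

Calling `srand(seed)` once before `init_weights` gives reproducible initial weights for a fixed seed, which helps when comparing training runs.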

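```c
/*//////////////////////////////////////////*/
/* subroutine: input of the m-th training sample */
/*/////////////////////////////////////////*/
int input_P(int m)
{
    int i;
    for (i = 0; i < IN; i++)
    {
        P[i] = Study_Data[m].input[i];   /* fetch the data of the m-th sample */
        printf("P[%d]=%f\n", i, P[i]);
    }
    return 1;
} /* end of subroutine input_P(m) */
```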

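```c
/* subroutine: teacher signal of the m-th sample */
int input_T(int m)
{
    int k;
    for (k = 0; k < ON; k++)
        T[k] = Study_Data[m].teach[k];
    return 1;
} /* end of subroutine input_T(m) */
```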

    int H_I_O()
    {
    double sigma;
    int i, j;
    for (j = 0; j < HN; j++)
    {

sigm