实验3-MapReduce编程初级实践_精品文档Word格式文档下载.docx

《实验3-MapReduce编程初级实践_精品文档Word格式文档下载.docx》由会员分享,可在线阅读,更多相关《实验3-MapReduce编程初级实践_精品文档Word格式文档下载.docx(7页珍藏版)》请在冰豆网上搜索。

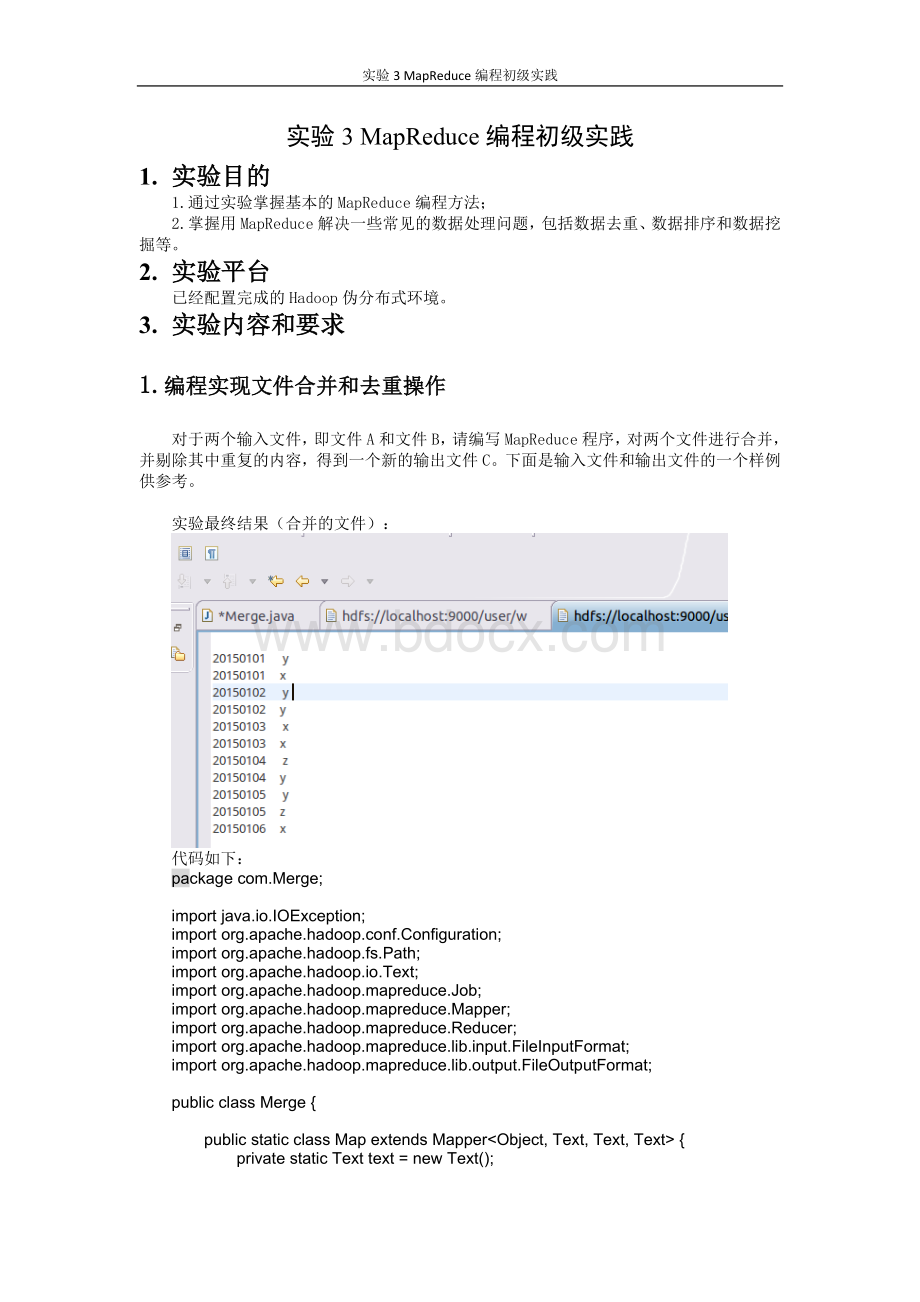

代码如下:

packagecom.Merge;

importjava.io.IOException;

importorg.apache.hadoop.conf.Configuration;

importorg.apache.hadoop.fs.Path;

importorg.apache.hadoop.io.Text;

importorg.apache.hadoop.mapreduce.Job;

importorg.apache.hadoop.mapreduce.Mapper;

importorg.apache.hadoop.mapreduce.Reducer;

importorg.apache.hadoop.mapreduce.lib.input.FileInputFormat;

importorg.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

publicclassMerge{

publicstaticclassMapextendsMapper<

Object,Text,Text,Text>

{

privatestaticTexttext=newText();

publicvoidmap(Objectkey,Textvalue,Contextcontext)

throwsIOException,InterruptedException{

text=value;

context.write(text,newText("

"

));

}

}

publicstaticclassReduceextendsReducer<

Text,Text,Text,Text>

publicvoidreduce(Textkey,Iterable<

Text>

values,Contextcontext)

context.write(key,newText("

publicstaticvoidmain(String[]args)throwsException{

Configurationconf=newConfiguration();

conf.set("

fs.defaultFS"

"

hdfs:

//localhost:

9000"

);

String[]otherArgs=newString[]{"

input"

output"

};

if(otherArgs.length!

=2){

System.err.println("

Usage:

Mergeandduplicateremoval<

in>

<

out>

System.exit

(2);

Jobjob=Job.getInstance(conf,"

Mergeandduplicateremoval"

job.setJarByClass(Merge.class);

job.setMapperClass(Map.class);

job.setReducerClass(Reduce.class);

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

FileInputFormat.addInputPath(job,newPath(otherArgs[0]));

FileOutputFormat.setOutputPath(job,newPath(otherArgs[1]));

System.exit(job.waitForCompletion(true)?

0:

1);

}

2.编写程序实现对输入文件的排序

现在有多个输入文件,每个文件中的每行内容均为一个整数。

要求读取所有文件中的整数,进行升序排序后,输出到一个新的文件中,输出的数据格式为每行两个整数,第一个数字为第二个整数的排序位次,第二个整数为原待排列的整数。

实验结果截图:

packagecom.MergeSort;

importorg.apache.hadoop.io.IntWritable;

publicclassMergeSort{

publicstaticclassMapextends

Mapper<

Object,Text,IntWritable,IntWritable>

privatestaticIntWritabledata=newIntWritable();

Stringline=value.toString();

data.set(Integer.parseInt(line));

context.write(data,newIntWritable

(1));

publicstaticclassReduceextends

Reducer<

IntWritable,IntWritable,IntWritable,IntWritable>

privatestaticIntWritablelinenum=newIntWritable

(1);

publicvoidreduce(IntWritablekey,Iterable<

IntWritable>

values,

Contextcontext)throwsIOException,InterruptedException{

for(IntWritableval:

values){

context.write(linenum,key);

linenum=newIntWritable(linenum.get()+1);

}

input2"

output2"

/*直接设置输入参数*/

mergesort<

mergesort"

job.setJarByClass(MergeSort.class);

job.setOutputKeyClass(IntWritable.class);

job.setOutputValueClass(IntWritable.class);

3.对给定的表格进行信息挖掘

下面给出一个child-parent的表格,要求挖掘其中的父子辈关系,给出祖孙辈关系的表格。

实验最后结果截图如下:

packagecom.join;

importjava.util.*;

importorg.apache.