数学建模竞赛蠓虫分类.docx

《数学建模竞赛蠓虫分类.docx》由会员分享,可在线阅读,更多相关《数学建模竞赛蠓虫分类.docx(8页珍藏版)》请在冰豆网上搜索。

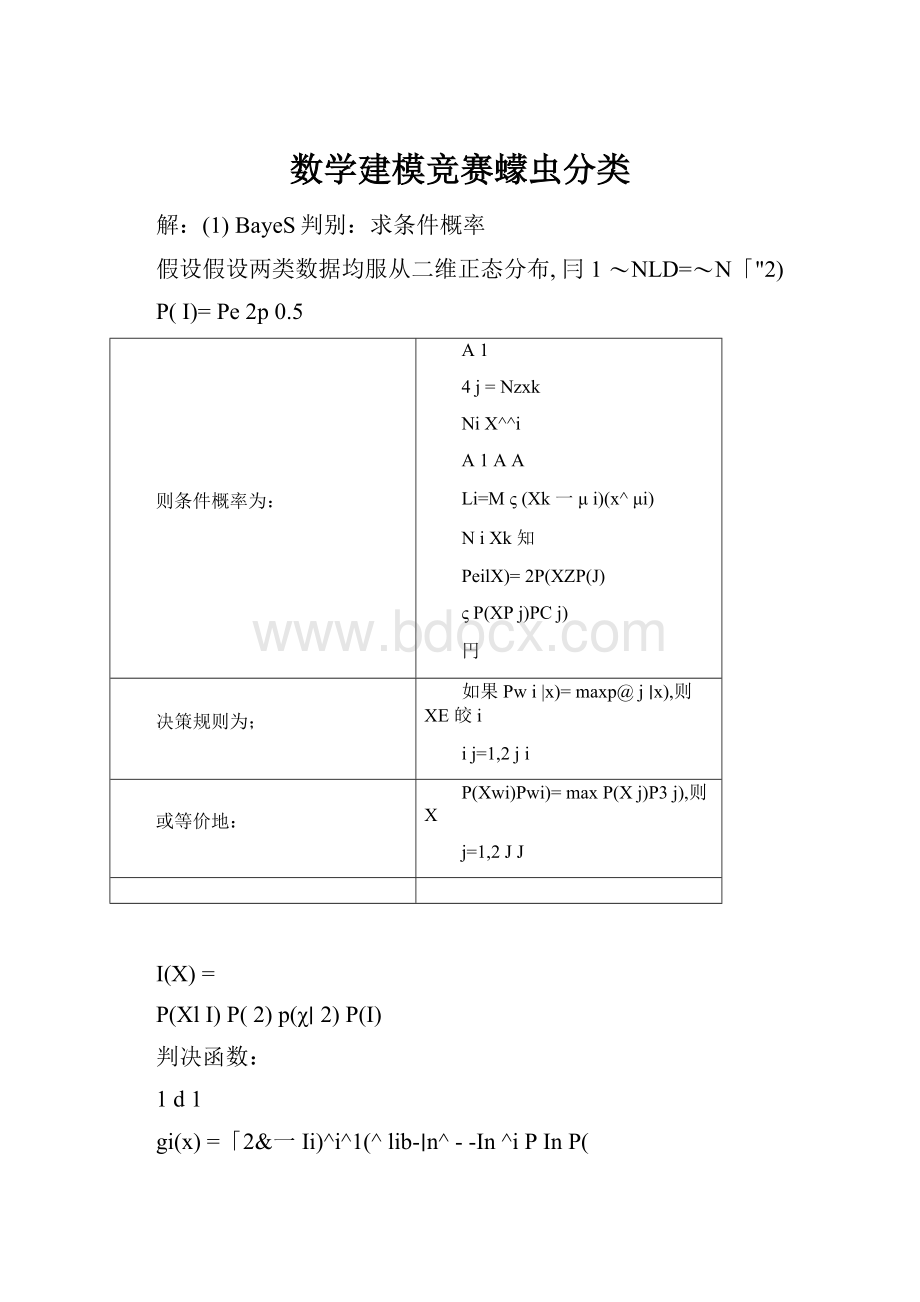

Mathematical Modeling Competition: Midge Classification

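Solution:

(1) Bayes discriminant:

Compute the class-conditional probabilities.

Assume that the data of both classes follow bivariate normal distributions, $p(x\mid\omega_1)\sim N(\mu_1,\Sigma_1)$ and $p(x\mid\omega_2)\sim N(\mu_2,\Sigma_2)$, with equal priors

$$P(\omega_1)=P(\omega_2)=0.5$$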

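The conditional probabilities are then obtained from the sample estimates of the mean and covariance of each class:

$$\hat\mu_i=\frac{1}{N_i}\sum_{x_k\in\omega_i}x_k$$

$$\hat\Sigma_i=\frac{1}{N_i}\sum_{x_k\in\omega_i}(x_k-\hat\mu_i)(x_k-\hat\mu_i)^T$$

$$P(\omega_i\mid x)=\frac{p(x\mid\omega_i)P(\omega_i)}{\sum_{j=1}^{2}p(x\mid\omega_j)P(\omega_j)}$$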

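The decision rule is:

If $P(\omega_i\mid x)=\max_{j=1,2}P(\omega_j\mid x)$, then $x\in\omega_i$;

or equivalently:

if $p(x\mid\omega_i)P(\omega_i)=\max_{j=1,2}p(x\mid\omega_j)P(\omega_j)$, then $x\in\omega_i$.

In terms of the likelihood ratio, decide $x\in\omega_1$ if

$$l(x)=\frac{p(x\mid\omega_1)}{p(x\mid\omega_2)}>\frac{P(\omega_2)}{P(\omega_1)}$$

and $x\in\omega_2$ otherwise.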

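Discriminant function:

$$g_i(x)=-\frac{1}{2}(x-\mu_i)^T\Sigma_i^{-1}(x-\mu_i)-\frac{d}{2}\ln 2\pi-\frac{1}{2}\ln|\Sigma_i|+\ln P(\omega_i)$$

The decision surface is given by $g_i(x)=g_j(x)$, that is,

$$-\frac{1}{2}\left[(x-\mu_i)^T\Sigma_i^{-1}(x-\mu_i)-(x-\mu_j)^T\Sigma_j^{-1}(x-\mu_j)\right]-\frac{1}{2}\ln\frac{|\Sigma_i|}{|\Sigma_j|}+\ln\frac{P(\omega_i)}{P(\omega_j)}=0$$

Similarly, the Bayes minimum-risk discriminant can be obtained once the risks (losses) are specified.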
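As a rough illustration of the minimum-risk rule mentioned above, the sketch below computes the posteriors of the five unlabeled samples under Gaussian class models and then picks, for each sample, the action with the smaller conditional risk $R(\alpha_i\mid x)=\sum_j\lambda_{ij}P(\omega_j\mid x)$. The loss matrix `lambda` is a made-up value chosen only for illustration and is not part of the original solution. The Gaussian density is written out by hand so that no toolbox is required.

```matlab
% Minimum-risk Bayes decision: a sketch with an ASSUMED loss matrix.
x=[1.20 1.30 1.18 1.14 1.26 1.28 1.36 1.48 1.40 1.38 1.24 1.38 1.54 1.38 1.56 1.24 1.28 1.40 1.22 1.36;
   1.86 1.96 1.78 1.78 2.00 2.00 1.74 1.82 1.70 1.90 1.72 1.64 1.82 1.82 2.08 1.80 1.78 2.04 1.88 1.78];
n1=6; n2=9;
A=x(:,1:n1);            % class 1 training samples
B=x(:,n1+1:n1+n2);      % class 2 training samples
U=x(:,n1+n2+1:end);     % unlabeled samples
m1=mean(A,2); m2=mean(B,2);
sgm1=cov(A'); sgm2=cov(B');

% Bivariate normal densities p(x|w_1), p(x|w_2) at each column of U
D1=U-repmat(m1,1,size(U,2));
D2=U-repmat(m2,1,size(U,2));
p1=exp(-0.5*sum(D1.*(sgm1\D1),1))/(2*pi*sqrt(det(sgm1)));
p2=exp(-0.5*sum(D2.*(sgm2\D2),1))/(2*pi*sqrt(det(sgm2)));

P=[0.5;0.5];                              % equal priors, as in the text
joint=[p1*P(1); p2*P(2)];
post=joint./repmat(sum(joint,1),2,1);     % posteriors, one column per sample

lambda=[0 2;                              % ASSUMED losses: lambda(i,j) is the cost of
        1 0];                             % deciding class i when the true class is j
R=lambda*post;                            % conditional risks R(alpha_i|x)
[~,decision]=min(R,[],1);                 % 1 -> class 1, 2 -> class 2
disp(decision)
```

With a zero-one loss (`lambda=[0 1;1 0]`) the rule reduces to the maximum-posterior decision described earlier, so changing `lambda` shows directly how unequal losses shift the decision.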

    x=[1.20 1.30 1.18 1.14 1.26 1.28 1.36 1.48 1.40 1.38 1.24 1.38 1.54 1.38 1.56 1.24 1.28 1.40 1.22 1.36;
       1.86 1.96 1.78 1.78 2.00 2.00 1.74 1.82 1.70 1.90 1.72 1.64 1.82 1.82 2.08 1.80 1.78 2.04 1.88 1.78];
    n1=6; n2=9; n3=5;

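    % class 1: 'o', class 2: '*', unlabeled samples: red '+'
    plot(x(1,1:n1),x(2,1:n1),'o',x(1,n1+1:n1+n2),x(2,n1+1:n1+n2),'*',x(1,n1+n2+1:end),x(2,n1+n2+1:end),'r+');
    % class means (the original computed them from an undefined variable y)
    m1=mean(x(:,1:n1),2);
    m2=mean(x(:,n1+1:n1+n2),2);
    % class covariances; cov normalizes by N-1
    sgm1=cov(x(:,1:n1)'); %=s1/(n1-1);
    sgm2=cov(x(:,n1+1:n1+n2)');
    % unlabeled samples, one per row
    X=x(:,n1+n2+1:end);
    X=X';
    % class-conditional densities
    pxw1=mvnpdf(X,m1',sgm1);
    pxw2=mvnpdf(X,m2',sgm2);
    % posteriors under equal priors
    pwx1=pxw1./(pxw1+pxw2);
    pwx2=pxw2./(pxw1+pxw2);
    display('Using Bayes principle:')
    Apf=find(pwx1>pwx2)+n1+n2,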

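(2) Fisher discriminant:

Find the projection direction $w$.

Figure 4.3: Basic principle of the Fisher linear discriminant

Criterion function:

$$J_F(w)=\frac{(\tilde m_1-\tilde m_2)^2}{\tilde S_1^2+\tilde S_2^2}=\frac{w^TS_bw}{w^TS_ww}$$

where

$$m_i=\frac{1}{N_i}\sum_{x\in\mathcal X_i}x,\qquad \tilde m_i=\frac{1}{N_i}\sum_{y\in\mathcal Y_i}y$$

$$S_i=\sum_{x\in\mathcal X_i}(x-m_i)(x-m_i)^T,\quad i=1,2,\qquad S_w=S_1+S_2,\qquad S_b=(m_1-m_2)(m_1-m_2)^T$$

$$\tilde S_i^2=\sum_{y\in\mathcal Y_i}(y-\tilde m_i)^2,\qquad \tilde S_w=\tilde S_1^2+\tilde S_2^2$$

Optimal solution:

$$w^*=S_w^{-1}(m_1-m_2)$$

The threshold $y_0$ can be chosen in several ways:

(1) $y_0=\dfrac{\tilde m_1+\tilde m_2}{2}$

(2) $y_0=\dfrac{N_1\tilde m_1+N_2\tilde m_2}{N_1+N_2}$

(3) $y_0=\dfrac{\tilde m_1+\tilde m_2}{2}+\dfrac{\ln\left(P(\omega_1)/P(\omega_2)\right)}{N_1+N_2-2}$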
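To make the threshold choices concrete, the following sketch computes the Fisher direction from the 15 labeled samples and evaluates three commonly used thresholds: the midpoint of the projected means, their sample-size-weighted average, and the midpoint shifted by a log prior ratio. The third form is written here as it usually appears in textbooks and should be read as an assumption, as should the variable names (`mt1`, `y0a`, and so on), which are illustrative only.

```matlab
% Fisher direction and three candidate thresholds (sketch).
x=[1.20 1.30 1.18 1.14 1.26 1.28 1.36 1.48 1.40 1.38 1.24 1.38 1.54 1.38 1.56 1.24 1.28 1.40 1.22 1.36;
   1.86 1.96 1.78 1.78 2.00 2.00 1.74 1.82 1.70 1.90 1.72 1.64 1.82 1.82 2.08 1.80 1.78 2.04 1.88 1.78];
n1=6; n2=9;
A=x(:,1:n1); B=x(:,n1+1:n1+n2);
m1=mean(A,2); m2=mean(B,2);
D1=A-repmat(m1,1,n1); D2=B-repmat(m2,1,n2);
Sw=D1*D1'+D2*D2';               % within-class scatter S_w = S_1 + S_2
w=Sw\(m1-m2);                   % direction w* = S_w^{-1}(m1 - m2)
y=w'*x;                         % projections of all 20 samples
mt1=mean(y(1:n1));              % projected mean of class 1
mt2=mean(y(n1+1:n1+n2));        % projected mean of class 2
P1=0.5; P2=0.5;                 % equal priors, as assumed in the Bayes part
y0a=(mt1+mt2)/2;                            % midpoint of projected means
y0b=(n1*mt1+n2*mt2)/(n1+n2);                % weighted by sample counts
y0c=(mt1+mt2)/2+log(P1/P2)/(n1+n2-2);       % midpoint shifted by the prior ratio
fprintf('y0(1)=%.4f  y0(2)=%.4f  y0(3)=%.4f\n',y0a,y0b,y0c);
```

With equal priors the logarithm vanishes, so the third threshold coincides with the first; the second differs whenever the two classes have different sample counts.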

    % class means
    m1=mean(x(:,1:n1),2); m2=mean(x(:,n1+1:n1+n2),2);
    % within-class scatter matrices
    s1=(x(:,1:n1)-repmat(m1,1,n1))*(x(:,1:n1)-repmat(m1,1,n1))';
    s2=(x(:,n1+1:n1+n2)-repmat(m2,1,n2))*(x(:,n1+1:n1+n2)-repmat(m2,1,n2))';
    % projection direction and projected samples
    S=s1+s2; w=S\(m1-m2); y=w'*x;
    % projected class means and threshold
    mm1=sum(y(1:n1))/n1; mm2=sum(y(n1+1:n1+n2))/n2; y0=(mm1+mm2)/2;
    %y0=(mm1*n1+mm2*n2)/(n1+n2);
    dpyb=y(n1+n2+1:end);
    display('Using Fisher principle:')
    Apf=find(dpyb>y0)+n1+n2,
    figure(2);
    t=1.1:0.01:1.6;
    % decision line w(1)*t+w(2)*ft=y0
    kkk=-w(1)/w(2);
    ft=kkk*t+y0/w(2);
    plot(x(1,1:n1),x(2,1:n1),'o',x(1,n1+1:n1+n2),x(2,n1+1:n1+n2),'*',x(1,n1+n2+1:end),x(2,n1+n2+1:end),'r+',t,ft);
    axis([1.1,1.6,1.6,2.1]);

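Perceptron criterion and the gradient descent algorithm:

The goal is a weight vector $a$ such that, for the normalized augmented samples $y_n$,

$$a^Ty_n>0,\quad n=1,2,\dots,N$$

Figure 4.5: Solution region and solution vector, (a) unnormalized, (b) normalized

Gradient descent:

$$a(k+1)=a(k)-\eta(k)\nabla J(a(k))$$

With the perceptron criterion $J_p(a)=\sum_{y\in\mathcal Y_k}(-a^Ty)$, where $\mathcal Y_k$ is the set of samples misclassified by $a(k)$, this gives the following algorithms.

Batch perceptron algorithm

1. begin initialize $a$, $\eta(\cdot)$, criterion $\theta$, $k\leftarrow 0$
2. do $k\leftarrow k+1$
3. $a\leftarrow a+\eta(k)\sum_{y\in\mathcal Y_k}y$
4. until $\left|\eta(k)\sum_{y\in\mathcal Y_k}y\right|<\theta$
5. return $a$
6. end

Fixed-increment single-sample perceptron

1. begin initialize $a$, $k\leftarrow 0$
2. do $k\leftarrow(k+1)\bmod N$
3. if $y^k$ is misclassified by $a$ then $a\leftarrow a+y^k$
4. until all patterns are correctly classified
5. return $a$
6. end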
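The batch variant can be sketched in a few lines. The version below is a simplified illustration, not the original author's code: it uses a fixed learning rate `eta`, stops as soon as no training sample is misclassified rather than testing the size of the update against a threshold, and adds an iteration cap `maxIter` as a safeguard. All three choices are assumptions.

```matlab
% Batch perceptron on the 15 labeled samples (sketch; eta and maxIter are assumed values).
x=[1.20 1.30 1.18 1.14 1.26 1.28 1.36 1.48 1.40 1.38 1.24 1.38 1.54 1.38 1.56 1.24 1.28 1.40 1.22 1.36;
   1.86 1.96 1.78 1.78 2.00 2.00 1.74 1.82 1.70 1.90 1.72 1.64 1.82 1.82 2.08 1.80 1.78 2.04 1.88 1.78];
n1=6; n2=9; N=n1+n2;
Y=[ones(1,N); x(:,1:N)];          % augmented samples, one per column
Y(:,n1+1:N)=-Y(:,n1+1:N);         % normalization: negate the class-2 samples
a=zeros(3,1);                     % initial weight vector
eta=1;                            % fixed learning rate (assumption)
maxIter=100000;                   % safety cap on the number of updates (assumption)
k=0;
while k<maxIter
    mis=find(a'*Y<=0);            % indices of currently misclassified samples
    if isempty(mis), break; end   % stop once every sample satisfies a'*y>0
    a=a+eta*sum(Y(:,mis),2);      % one update using all misclassified samples
    k=k+1;
end
fprintf('stopped after %d batch updates, %d samples still misclassified\n',k,sum(a'*Y<=0));
```

If the loop ends before reaching the cap, every labeled sample satisfies $a^Ty>0$, and the unlabeled samples can then be classified by the sign of $a^T[1;x]$.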

    %perceptron
    % augmented samples; class-2 samples are negated (normalization)
    x1=[ones(1,size(x,2));x];
    x1(:,n1+1:n1+n2)=-x1(:,n1+1:n1+n2);
    epsl=0.1;
    a=1-2*rand(3,1);
    k=0;
    while k<100000
        k=k+1;
        % cycle through the labeled samples one at a time
        y=x1(:,rem(k,n1+n2)+1);
        % fixed-increment update when the sample is misclassified
        if a'*y<=0
            a=a+y;
        end
    end
    y1=a'*x1(:,1:n1+n2);
    ind=find(y1<=0);
    display('The samples of the first class using the perceptron principle:')
    Apf=find(a'*x1(:,n1+n2+1:end)>0)
    % decision line a(1)+a(2)*t+a(3)*pt=0
    pt=-a(2)*t/a(3)-a(1)/a(3);
    figure(3),
    plot(x(1,1:n1),x(2,1:n1),'o',x(1,n1+1:n1+n2),x(2,n1+1:n1+n2),'*',x(1,n1+n2+1:end),x(2,n1+n2+1:end),'r+',t,[pt;ft]);

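Minimum squared error (MSE) criterion:

Let

$$Ya=b$$

where

$$Y=\begin{bmatrix}y_1^T\\ y_2^T\\ \vdots\\ y_N^T\end{bmatrix}=\begin{bmatrix}y_{11}&y_{12}&\cdots&y_{1\hat d}\\ y_{21}&y_{22}&\cdots&y_{2\hat d}\\ \vdots&\vdots&&\vdots\\ y_{N1}&y_{N2}&\cdots&y_{N\hat d}\end{bmatrix},\qquad b=[b_1,b_2,\dots,b_N]^T$$

Objective:

$$\min_a J_s(a)=\|e\|^2=\|Ya-b\|^2=\sum_{n=1}^{N}(a^Ty_n-b_n)^2\qquad(4\text{-}63)$$

Gradient of the objective function:

$$\nabla J_s(a)=\sum_{n=1}^{N}2(a^Ty_n-b_n)y_n=2Y^T(Ya-b)\qquad(4\text{-}64)$$

Setting the gradient to zero:

$$\nabla J_s(a^*)=0\;\Rightarrow\;Y^TYa^*=Y^Tb\qquad(4\text{-}65)$$

$$a^*=(Y^TY)^{-1}Y^Tb=Y^+b$$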
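The closed-form solution can be checked numerically. The sketch below builds the normalized sample matrix from the 15 labeled samples, solves the normal equations, compares the result with the pseudoinverse solution, and evaluates the gradient $2Y^T(Ya-b)$ at the solution. The choice $b=(1,\dots,1)^T$ follows the code in this section; the variable names `a1`, `a2`, and `grad` are illustrative.

```matlab
% MSE solution: normal equations versus pseudoinverse (sketch).
x=[1.20 1.30 1.18 1.14 1.26 1.28 1.36 1.48 1.40 1.38 1.24 1.38 1.54 1.38 1.56 1.24 1.28 1.40 1.22 1.36;
   1.86 1.96 1.78 1.78 2.00 2.00 1.74 1.82 1.70 1.90 1.72 1.64 1.82 1.82 2.08 1.80 1.78 2.04 1.88 1.78];
n1=6; n2=9; N=n1+n2;
Y=[ones(1,N); x(:,1:N)];        % augmented samples, one per column
Y(:,n1+1:N)=-Y(:,n1+1:N);       % negate the class-2 samples
Y=Y';                           % N-by-3 sample matrix, one sample per row
b=ones(N,1);                    % target margins
a1=(Y'*Y)\(Y'*b);               % solve Y'*Y*a = Y'*b
a2=pinv(Y)*b;                   % a = Y^+ * b
grad=2*Y'*(Y*a1-b);             % gradient of J_s at the solution
fprintf('difference between solutions: %.2e\n',norm(a1-a2));
fprintf('gradient norm at solution:    %.2e\n',norm(grad));
```

Both printed values should be at the level of rounding error. Solving the linear system with `\` or using `pinv` is generally preferable to forming the explicit inverse of $Y^TY$.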

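    %MSE
    b=ones(n1+n2,1);
    % normalized augmented training samples, one per row
    Y=x1(:,1:n1+n2);
    Y=Y';
    % pseudoinverse solution
    Yplus=(Y'*Y)^(-1)*Y'; ahat=Yplus*b;
    % decision line ahat(1)+ahat(2)*t+ahat(3)*mt=0
    mt=-ahat(2)*t/ahat(3)-ahat(1)/ahat(3);
    figure(4)
    % decision lines of the perceptron (pt), Fisher (ft) and MSE (mt) methods
    plot(t,[pt;ft;mt],x(1,1:n1),x(2,1:n1),'o',x(1,n1+1:n1+n2),x(2,n1+1:n1+n2),'*',x(1,n1+n2+1:end),x(2,n1+n2+1:end),'r+');
    axis([1.1,1.6,1.6,2.1]);
    display('Using MSE principle:')
    Apf=find(ahat'*x1(:,n1+n2+1:end)>0)+n1+n2,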