大连理工大学优化方法上机作业Word格式.docx

《大连理工大学优化方法上机作业Word格式.docx》由会员分享,可在线阅读,更多相关《大连理工大学优化方法上机作业Word格式.docx(11页珍藏版)》请在冰豆网上搜索。

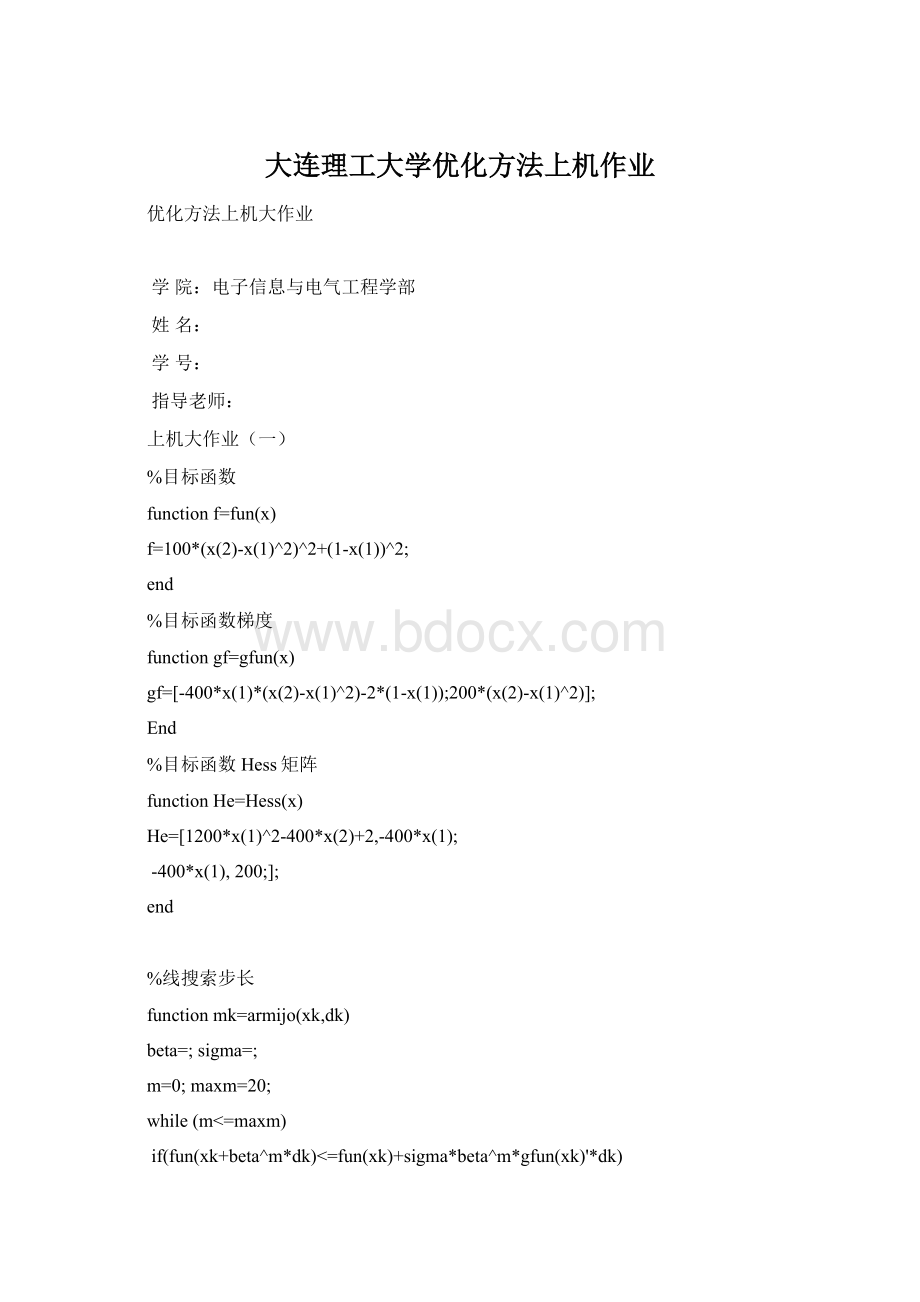

while(m<

=maxm)

if(fun(xk+beta^m*dk)<

=fun(xk)+sigma*beta^m*gfun(xk)'

*dk)

mk=m;

break;

end

m=m+1;

alpha=beta^mk

newxk=xk+alpha*dk

fk=fun(xk)

newfk=fun(newxk)

%最速下降法

function[k,x,val]=grad(fun,gfun,x0,epsilon)

%功能:

梯度法求解无约束优化问题:

minf(x)

%输入:

fun,gfun分别是目标函数及其梯度,x0是初始点,

%epsilon为容许误差

%输出:

k是迭代次数,x,val分别是近似最优点和最优值

maxk=5000;

%最大迭代次数

k=0;

while(k<

maxk)

gk=feval(gfun,x0);

%计算梯度

dk=-gk;

%计算搜索方向

if(norm(gk)<

epsilon),break;

end%检验终止准则

m=0;

mk=0;

while(m<

20)%用Armijo搜索步长

if(feval(fun,x0+beta^m*dk)<

=feval(fun,x0)+sigma*beta^m*gk'

break;

x0=x0+beta^mk*dk;

k=k+1;

x=x0;

val=feval(fun,x0);

>

x0=[0;

0];

[k,x,val]=grad('

fun'

'

gfun'

x0,1e-4)

迭代次数:

k=

1033

x=

val=

%牛顿法

x0=[0;

ep=1e-4;

maxk=10;

gk=gfun(x0);

ep)

x=x0

miny=fun(x)

k0=k

else

H=inv(Hess(x0));

x0=x0-H*gk;

miny=

迭代次数

k0=

2

%BFGS方法

function[k,x,val]=bfgs(fun,gfun,x0,varargin)

N=1000;

epsilon=1e-4;

sigma=;

n=length(x0);

Bk=eye(n);

N)

gk=feval(gfun,x0,varargin{:

});

dk=-Bk\gk;

20)

newf=feval(fun,x0+beta^m*dk,varargin{:

oldf=feval(fun,x0,varargin{:

if(newf<

=oldf+sigma*beta^m*gk'

x=x0+beta^mk*dk;

sk=x-x0;

yk=feval(gfun,x,varargin{:

})-gk;

if(yk'

*sk>

0)

Bk=Bk-(Bk*sk*sk'

*Bk)/(sk'

*Bk*sk)+(yk*yk'

)/(yk'

*sk);

x0=x;

val=feval(fun,x0,varargin{:

[k,x,val]=bfgs('

x0)

20

%共轭梯度法

function[k,x,val]=frcg(fun,gfun,x0,epsilon,N)

ifnargin<

5,N=1000;

4,epsilon=1e-4;

itern=k-(n+1)*floor(k/(n+1));

itern=itern+1;

if(itern==1)

betak=(gk'

*gk)/(g0'

*g0);

dk=-gk+betak*d0;

gd=gk'

*dk;

if(gd>

=0),dk=-gk;

epsilon),break;

x=x0+beta^m*dk;

g0=gk;

d0=dk;

k=k+1;

val=feval(fun,x);

[k,x,val]=frcg('

x0,1e-4,1000)

122

上机大作业

(二)

functionf_x=fun(x)

f_x=4*x

(1)-x

(2)^2-12;

%等式约束条件

functionhe=hf(x)

he=25-x

(1)^2-x

(2)^2;

%不等式约束条件

functiongi_x=gi(x,i)

switchi

case1

gi_x=10*x

(1)-x

(1)^2+10*x

(2)-x

(2)^2-34;

case2

gi_x=x

(1);

case3

gi_x=x

(2);

otherwise

%求目标函数的梯度

functionL_grad=grad(x,lambda,cigma)

d_f=[4;

2*x

(2)];

d_g(:

1)=[-2*x

(1);

-2*x

(2)];

2)=[10-2*x

(1);

10-2*x

(2)];

3)=[1;

4)=[0;

1];

L_grad=d_f+(lambda

(1)+cigma*hf(x))*d_g(:

1);

fori=1:

3

iflambda(i+1)+cigma*gi(x,i)<

L_grad=L_grad+(lambda(i+1)+cigma*gi(x,i))*d_g(:

i+1);

continue

%增广拉格朗日函数

functionLA=lag(x,lambda,cee)

LA=fun(x)+lambda

(1)*hf(x)+*cee*hf(x)^2;

LA=LA+1/(2*cee)*(min(0,lambda(i+1)+cee*gi(x,i))^2-lambda(i+1)^2);

functionxk=BFGS(x0,eps,lambda,cigma)

gk=grad(x0,lambda,cigma);

res_B=norm(gk);

k_B=0;

a_=1e-4;

rho=;

c=1e-4;

length_x=length(x0);

I=eye(length_x);

Hk=I;

whileres_B>

eps&

&

k_B<

=10000

dk=-Hk*gk;

whilem<

=5000

iflag(x0+a_*rho^m*dk,lambda,cigma)-lag(x0,lambda,cigma)<

=c*a_*rho^m*gk'

*dk

ak=a_*rho^mk;

xk=x0+ak*dk;

delta=xk-x0;

y=grad(xk,lambda,cigma)-gk;

Hk=(I-(delta*y'

)/(delta'

*y))*Hk*(I-(y*delta'

*y))+(delta*delta'

*y);

k_B=k_B+1;

x0=xk;

gk=y+gk;

%增广拉格朗日法

functionval_min=ALM(x0,eps)

lambda=zeros(4,1);

cigma=5;

alpha=10;

k=1;

res=[abs(hf(x0)),0,0,0];

res(1,i+1)=norm(min(gi(x0,i),-lambda(i+1)/cigma));

res=max(res);

whileres>

k<

1000

xk=BFGS(x0,eps,lambda,cigma);

lambda

(1)=lambda

(1)+cigma*hf(xk);

lambda(i+1)=lambda(i+1)+min(0,lambda(i+1)+gi(x0,1));

cigma=alpha*cigma;

res=[norm(hf(x0)),0,0,0];

val_min=fun(xk);

fprintf('

k=%d\n'

k);

fmin=%.4f\n'

val_min);

x=[%.4f;

%.4f]\n'

xk

(1),xk

(2));

val_min=ALM(x0,1e-4)

k=10

fmin=

x=[;

]

val_min=

上机大作业(三)

A=[11;

-10;

0-1];

n=2;

b=[1;

0;

G=[0;

02];

c=[24];

cvx_solversdpt3

cvx_begin

variablex(n)

minimize(x'

*G*x-c*x)

subjectto

A*x<

=b

cvx_end

disp(x)

Status:

Solved

Optimalvalue(cvx_optval):

A=[211;

123;

221;

-100;

0-10;

00-1];

n=3;

b=[2;

5;

6;

C=[-3-1-3];

minimize(C*x)