南航 (Nanhang) Summer International Course, Big Data Visualization: Final Assignment Summary and Experimental Report

Report of the course

➢ Theoretical summary

In the summer course taught by Professor Boris Kovalerchuk, I learned the fundamentals of big data, big data visualization, and data mining. The course deepened my understanding of big data visualization and mining and broadened my horizons.

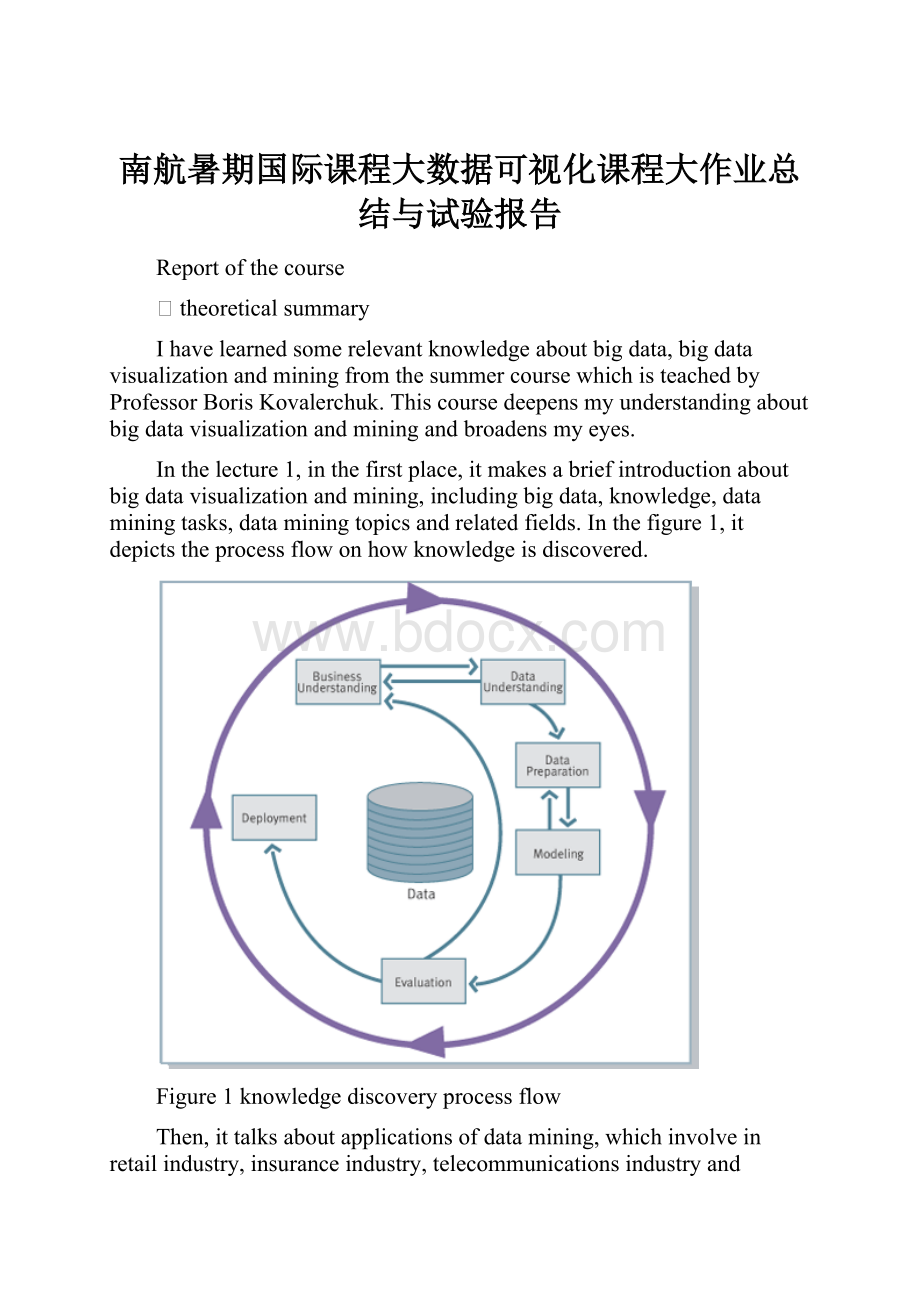

First, Lecture 1 gives a brief introduction to big data visualization and mining, covering big data, knowledge, data mining tasks, data mining topics, and related fields. Figure 1 depicts the process flow of knowledge discovery.

Figure 1 Knowledge discovery process flow

The lecture then turns to applications of data mining in the retail, insurance, telecommunications, and manufacturing industries. It also covers the rules used in data mining, such as first-order logic rules.

Second, in Lecture 2, I learned core data mining concepts and the differences between data mining and information retrieval. I then learned how data mining relates to databases, which Figure 2 illustrates.

Figure 2 Databases and data mining

Next, the lecture introduces regression models, in particular the linear regression equation, as follows:

$$y = a_0 + a_1 x \tag{1}$$

In addition, the multidimensional linear regression equation is as follows:

$$y = a_0 + a_1 x_1 + a_2 x_2 + \dots + a_n x_n \tag{2}$$
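As a minimal sketch of how the one-variable linear regression model can be fitted by least squares, the Python snippet below uses the closed-form estimates of the intercept and slope. The function name `fit_line` and the sample points are illustrative assumptions, not data from the course.

```python
def fit_line(xs, ys):
    """Return (intercept, slope) of the least-squares line y = a0 + a1 * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations of x, and of co-deviations of x and y.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical points that lie exactly on y = 1 + 2x.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
intercept, slope = fit_line(xs, ys)
print(intercept, slope)
```

The multidimensional case follows the same idea but needs a linear system solver, which is left out of this sketch.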

In the same lecture, I learned about training and testing data and about decision trees. Lecture 3 focuses on the confusion matrix and the ROC curve. A confusion matrix is a visualization tool typically used in supervised learning; it records the actual and predicted classifications produced by a classification system. The ROC curve is another way, besides the confusion matrix, to examine the performance of classifiers. The lecture then covers the Tanagra data mining software and how to use it.
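To make the ROC curve more concrete, here is a small Python sketch that sweeps a decision threshold over classifier scores and records the false positive rate and true positive rate at each step. The scores, labels, and function names are hypothetical; this is only one common way to build the curve, not necessarily how it was presented in the lecture.

```python
def roc_points(scores, labels):
    """Return ROC points (FPR, TPR) from a threshold sweep.

    labels: 1 for positive, 0 for negative; a higher score means "more positive".
    """
    pos = sum(labels)
    neg = len(labels) - pos
    points = [(0.0, 0.0)]
    # Lower the threshold one distinct score at a time.
    for thr in sorted(set(scores), reverse=True):
        tp = sum(1 for s, y in zip(scores, labels) if s >= thr and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= thr and y == 0)
        points.append((fp / neg, tp / pos))
    return points


def auc(points):
    """Area under the ROC curve by the trapezoidal rule."""
    return sum((x1 - x0) * (y0 + y1) / 2
               for (x0, y0), (x1, y1) in zip(points, points[1:]))


# Hypothetical classifier scores and true labels.
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3]
labels = [1, 1, 0, 1, 0, 0]
points = roc_points(scores, labels)
area = auc(points)
print(points, area)
```

A curve that stays close to the top-left corner (area near 1) indicates a better classifier than one near the diagonal (area near 0.5).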

Lecture 4 covers data classification and presents three classification methods, namely linear discrimination, neural networks, and decision trees, as follows:

Figure 3 Linear discrimination

Figure 4 Neural network

Figure 5 Decision tree

Among them, the decision tree is the most important part; it involves choosing the splitting attribute using the Gini index, information gain, and gain ratio. The Gini index of the split data is defined as

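$$gini_{split}(T) = \sum_{i=1}^{k} \frac{N_i}{N}\, gini(T_i) \tag{3}$$

where the data set $T$ with $N$ records is split into subsets $T_1, \dots, T_k$ of sizes $N_1, \dots, N_k$, and $gini(T) = 1 - \sum_j p_j^2$ with $p_j$ the relative frequency of class $j$ in $T$.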

The gain ratio is a modification of the information gain that reduces its bias toward attributes with many branches. It is defined as

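$$GainRatio(A) = \frac{Gain(A)}{SplitInfo(A)}, \qquad SplitInfo(A) = -\sum_{i=1}^{k} \frac{N_i}{N} \log_2 \frac{N_i}{N} \tag{4}$$

where attribute $A$ splits the $N$ records into $k$ subsets of sizes $N_1, \dots, N_k$.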
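The two splitting criteria can be sketched in Python as follows, using the textbook definitions of the Gini index of a split and of the gain ratio. The Outlook and Class columns are taken from the weather data in the experimental report; the function names are my own.

```python
from collections import Counter
from math import log2


def gini(labels):
    """Gini index of one set of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def entropy(labels):
    """Shannon entropy (in bits) of one set of class labels."""
    n = len(labels)
    return -sum((c / n) * log2(c / n) for c in Counter(labels).values())


def partition(values, labels):
    """Group the class labels by attribute value."""
    groups = {}
    for v, y in zip(values, labels):
        groups.setdefault(v, []).append(y)
    return list(groups.values())


def gini_split(values, labels):
    """Size-weighted Gini index over the subsets produced by the split."""
    n = len(labels)
    return sum(len(g) / n * gini(g) for g in partition(values, labels))


def gain_ratio(values, labels):
    """Information gain divided by the split information."""
    n = len(labels)
    groups = partition(values, labels)
    gain = entropy(labels) - sum(len(g) / n * entropy(g) for g in groups)
    split_info = -sum(len(g) / n * log2(len(g) / n) for g in groups)
    return gain / split_info


# Outlook and Class columns of the 14 weather records in the experimental report.
outlook = ["sunny"] * 5 + ["overcast"] * 4 + ["rain"] * 5
play = ["Play", "DontPlay", "DontPlay", "DontPlay", "Play",
        "Play", "Play", "Play", "Play",
        "DontPlay", "DontPlay", "Play", "Play", "Play"]

g_split = gini_split(outlook, play)
g_ratio = gain_ratio(outlook, play)
print(round(g_split, 3), round(g_ratio, 3))
```

A lower split Gini index and a higher gain ratio both indicate a more useful splitting attribute.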

The lecture also discusses parallel coordinate techniques and visualization. Data can be represented in 1, 2, and 3 dimensions, and even in 4 or more dimensions. The following two pictures are examples of visualization: one is a bad visualization, the other a better one.

Figure 6 Bad visualization

Figure 7 Better visualization
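The parallel coordinates idea mentioned above can be sketched without any plotting library: each n-dimensional record becomes a polyline with one vertex per axis. The snippet below is only an illustration; the min-max scaling, the function name, and the sample records are assumptions of mine.

```python
def parallel_coordinates(records):
    """Map each n-D record to a polyline with one vertex per axis.

    Axis i is the vertical line x = i; every coordinate is min-max scaled to [0, 1].
    """
    dims = list(zip(*records))
    lows = [min(d) for d in dims]
    highs = [max(d) for d in dims]
    polylines = []
    for rec in records:
        line = []
        for i, v in enumerate(rec):
            span = highs[i] - lows[i]
            # A constant dimension is drawn at mid-height.
            y = (v - lows[i]) / span if span else 0.5
            line.append((i, y))
        polylines.append(line)
    return polylines


# Hypothetical 4-D records.
records = [(1.0, 10.0, 0.2, 5.0), (3.0, 20.0, 0.4, 5.0), (2.0, 30.0, 0.8, 5.0)]
lines = parallel_coordinates(records)
for line in lines:
    print(line)
```

Drawing each list of vertices as a connected line gives the familiar parallel coordinates picture, in which no dimension is discarded.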

Lecture 5 first introduces a visual data mining software package, Lots of Lines. It then discusses the visualization of multidimensional data with collocated paired coordinates and general line coordinates.

Third, in Lecture 6, I learned about machine learning, including supervised and unsupervised machine learning, as well as methods of data visualization and of comparing visualizations. The lecture then covers clustering and its methods, including K-means clustering and hierarchical clustering, and explains K-means clustering in detail.
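A minimal Python sketch of K-means clustering (Lloyd's algorithm) is given below. The sample points are hypothetical, and starting the centroids at the first k points is an assumption I make so that the run is deterministic; practical implementations usually choose the initial centroids randomly.

```python
def kmeans(points, k, iterations=100):
    """Plain k-means on a list of equal-length tuples.

    Centroids start at the first k points (an assumption, for determinism).
    """
    centroids = [tuple(p) for p in points[:k]]
    assignment = None
    for _ in range(iterations):
        # Assignment step: each point goes to its nearest centroid.
        new_assignment = [
            min(range(k),
                key=lambda c: sum((a - b) ** 2 for a, b in zip(p, centroids[c])))
            for p in points
        ]
        if new_assignment == assignment:
            break  # Converged: no point changed its cluster.
        assignment = new_assignment
        # Update step: each centroid moves to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:
                centroids[c] = tuple(sum(col) / len(members) for col in zip(*members))
    return centroids, assignment


# Hypothetical 2-D points forming two well-separated groups.
points = [(1.0, 1.0), (9.0, 9.0), (1.0, 2.0), (2.0, 1.0), (8.0, 9.0), (9.0, 8.0)]
centroids, assignment = kmeans(points, k=2)
print(centroids, assignment)
```

The two steps alternate until the assignment stops changing, which is the stopping rule used in this sketch.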

Lecture 7 introduces collocated coordinates. Lecture 8 focuses on collaborative lossless visualization. It also covers a collaborative approach to enhancing visualization and the advantages of lossless visualization, and it demonstrates the advantages of a new lossless visualization based on the idea of collocating pairs of coordinates. It presents applications of CPC stars and dense pixel displays.

Finally, Lecture 9 discusses envelopes in visual data mining. In Lecture 10, the professor gave well-reasoned answers to the questions that students raised. Through the course, I gained a preliminary knowledge of data visualization and mining.
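As I understand the idea of collocating pairs of coordinates, an n-dimensional record is split into consecutive pairs, each pair is drawn as a point in one shared 2-D plane, and the points are joined by directed edges. The Python sketch below follows that reading; the function name, the sample record, and the handling of an odd number of coordinates (repeating the last one) are assumptions on my part.

```python
def cpc_path(record):
    """Turn an n-D record into 2-D nodes and directed edges (collocated pairs)."""
    coords = list(record)
    if len(coords) % 2 == 1:
        # Assumption: pad an odd-length record by repeating its last coordinate.
        coords.append(coords[-1])
    nodes = [(coords[i], coords[i + 1]) for i in range(0, len(coords), 2)]
    edges = list(zip(nodes, nodes[1:]))
    return nodes, edges


# Hypothetical 6-D record.
record = (5, 4, 0, 6, 4, 10)
nodes, edges = cpc_path(record)
# Flattening the nodes gives back every original coordinate.
restored = tuple(v for node in nodes for v in node)
print(nodes, edges, restored)
```

Because every coordinate can be read back from the drawn path, the representation loses no information, which is the sense in which such a visualization is called lossless.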

➢ Experimental report

1 A two-class confusion matrix

(1) 15 data records in total:

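| n | H (cm) | W = x (kg) | H - 100 = y (kg) | e = x - y |
|---|---|---|---|---|
| 1 | 171 | 74 | 71 | 3 |
| 2 | 176 | 62 | 76 | -14 |
| 3 | 181 | 85 | 81 | 4 |
| 4 | 159 | 50 | 59 | -9 |
| 5 | 167 | 50 | 67 | -17 |
| 6 | 158 | 60 | 58 | 2 |
| 7 | 160 | 62 | 60 | 2 |
| 8 | 170 | 56 | 70 | -14 |
| 9 | 178 | 85 | 78 | 7 |
| 10 | 170 | 55 | 70 | -15 |
| 11 | 177 | 64 | 77 | -13 |
| 12 | 184 | 76 | 84 | -8 |
| 13 | 176 | 82 | 76 | 6 |
| 14 | 176 | 70 | 76 | -6 |
| 15 | 176 | 71 | 76 | -5 |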
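The derived columns of the 15 records can be recomputed with a few lines of Python. The heights and weights are those listed in the report; the variable names are my own.

```python
# Heights (cm) and weights (kg) of the 15 records listed in the report.
heights_cm = [171, 176, 181, 159, 167, 158, 160, 170, 178, 170, 177, 184, 176, 176, 176]
weights_kg = [74, 62, 85, 50, 50, 60, 62, 56, 85, 55, 64, 76, 82, 70, 71]

# y = H - 100: the reference weight derived from the height.
reference_kg = [h - 100 for h in heights_cm]
# e = x - y: the difference between actual weight and reference weight.
errors = [x - y for x, y in zip(weights_kg, reference_kg)]

positive_count = sum(1 for e in errors if e > 0)
negative_count = sum(1 for e in errors if e < 0)
print(errors)
print(positive_count, negative_count)
```

Six records have e > 0 and nine have e < 0. These counts happen to equal the numbers of actual positives and actual negatives in the confusion matrix of this experiment, although the report does not state how the two classes were defined.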

(2) confusion matrix

| | Predicted Negative | Predicted Positive |
|---|---|---|
| Actual Negative | 7 | 2 |
| Actual Positive | 1 | 5 |

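(3) computing results

| No. | Measure | Computation | Value |
|---|---|---|---|
| (1) | FP (false positive rate) | 2 / (7 + 2) | 0.222 |
| (2) | FN (false negative rate) | 1 / (1 + 5) | 0.167 |
| (3) | TP (true positive rate) | 5 / (1 + 5) | 0.833 |
| (4) | TN (true negative rate) | 7 / (7 + 2) | 0.778 |
| (5) | AC (accuracy) | (7 + 5) / 15 | 0.800 |
| (6) | P (precision) | 5 / (2 + 5) | 0.714 |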
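The six measures can be checked with a short Python sketch that uses the standard definitions of the rates for a two-class confusion matrix. The four counts are those of the confusion matrix above; the function and parameter names are my own.

```python
def confusion_metrics(tn, fp, fn, tp):
    """Standard rates for a two-class confusion matrix."""
    return {
        "FP": fp / (tn + fp),                   # false positive rate
        "FN": fn / (fn + tp),                   # false negative rate
        "TP": tp / (fn + tp),                   # true positive rate (recall)
        "TN": tn / (tn + fp),                   # true negative rate
        "AC": (tn + tp) / (tn + fp + fn + tp),  # accuracy
        "P": tp / (fp + tp),                    # precision
    }


# Counts from the confusion matrix: rows are actual, columns are predicted.
metrics = confusion_metrics(tn=7, fp=2, fn=1, tp=5)
for name, value in metrics.items():
    print(f"{name} = {value:.3f}")
```

Note that FP + TN = 1 and FN + TP = 1, because each pair is computed over the same row of the matrix.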

2 Data mining using Tanagra software

(1) experimental data

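| Outlook | Temp | Humidity | Windy | Class |
|---|---|---|---|---|
| sunny | 75 | 70 | yes | Play |
| sunny | 80 | 90 | yes | DontPlay |
| sunny | 85 | 85 | no | DontPlay |
| sunny | 72 | 95 | no | DontPlay |
| sunny | 69 | 70 | no | Play |
| overcast | 72 | 90 | yes | Play |
| overcast | 83 | 78 | no | Play |
| overcast | 64 | 65 | yes | Play |
| overcast | 81 | 75 | no | Play |
| rain | 71 | 80 | yes | DontPlay |
| rain | 65 | 70 | yes | DontPlay |
| rain | 75 | 80 | no | Play |
| rain | 68 | 80 | no | Play |
| rain | 70 | 96 | no | Play |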

(2) The horizontal axis is Humidity, the vertical axis is Temp, and the legend shows the attribute value; the attributes are Outlook, Windy, and Class. The data mining results are shown in the following figures.

Figure 8 The attribute is Outlook

Figure 9 The attribute is Windy

Figure 10 The attribute is Class

(3) Other results are shown in the following figures.

Figure 11 Distribution of Windy

Figure 12 Distribution of Outlook

Figure 13 Regression characteristics

3 Visual data mining using Lots of Lines software

(1) experimental data

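| Outlook | Temp | Humidity | Windy | Class |
|---|---|---|---|---|
| 1 | 75 | 70 | 1 | DontPlay |
| 1 | 80 | 90 | 0 | DontPlay |
| 1 | 85 | 85 | 0 | DontPlay |
| 3 | 72 | 95 | 1 | DontPlay |
| 3 | 69 | 70 | 1 | DontPlay |
| 1 | 72 | 90 | 1 | Play |
| 1 | 83 | 78 | 0 | Play |
| 2 | 64 | 65 | 1 | Play |
| 2 | 81 | 75 | 0 | Play |
| 2 | 71 | 80 | 1 | Play |
| 2 | 65 | 70 | 0 | Play |
| 3 | 75 | 80 | 0 | Play |
| 3 | 68 | 80 | 0 | Play |
| 3 | 70 | 96 | 0 | Play |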

(2) the results of data mining

Figure 14 Graphics in four coordinates

Figure 15 Graphics with data table